PROJECT MANAGER:

Dr P H J Kelly

Department of Computing

Imperial College of Science, Technology and Medicine

180 Queen's Gate

London

SW7 2BZ

Telephone: 0171 594 8332

e-mail: phjk@doc.ic.ac.uk

OTHER PARTICIPANTS:

ICL Ltd (contacts: Ian Colloff, Jeff Poskett, Owen Evans)

Lovelace Road

Bracknell

Berkshire

RG12 8SN

Telephone: 01344 424842

GRANT NUMBER: GR/J/99117

START DATE: 15/6/94

DURATION: three years

AMOUNT: £137,488

PROJECT OBJECTIVES

- To study the design of general-purpose shared-memory parallel computers

- To design data management protocols and policies with high average performance and reasonable worst-case performance

- To evaluate them using benchmark programs, and to establish analytical bounds on the performance characteristics of different designs

PROGRESS/DELIVERABLES (AS AT 1/3/97)

- In collaboration with an ex-RA now at Sun Microsystems, we have developed software (called ALITE) to simulate various shared-memory architectures.

- We have developed a novel class of cache coherency protocols, based on the idea of "proxies".

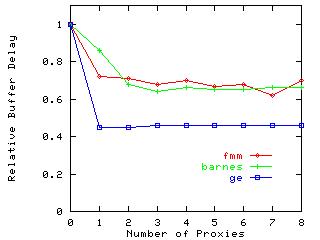

- We have extended the simulator to simulate the proxy protocol, and have used a variety of benchmarks to evaluate the new protocol's benefits and costs. Figure 1 illustrates some preliminary results.

Figure 1. Impact of proxy protocol on memory access queuing delays

- In addition to standard benchmarks from the SPLASH-2 suite and scientific application kernels, we have investigated multicache performance issues in several locally developed programs, such as a simple relational query package and a multi-threaded pipelined transaction processor. We are currently parallelising the C4.5 data mining application for inclusion in our benchmark suite.

- We have constructed an analytical performance model for the base protocol simulated by ALITE, and have validated it against simulation experiments using statistical workload models.

- We have developed a revised proxy-based cache coherency protocol which avoids some problems with the initial design, and are currently evaluating it.

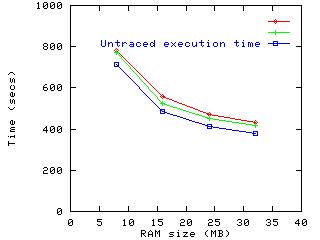

Figure 2. Re-execution of Apache using traces captured from run with 32MB.

- To address the difficulty of constructing benchmarks that are representative of commercial workloads, we have developed a novel approach to workload characterisation based on logging each process's interactions with the operating system and with other processes. An initial evaluation using a WWW server as an example shows that trace capture causes only a small, manageable slowdown. The resulting traces can often be used to re-execute the application, so that the workload's behaviour can be studied at leisure, for example under simulation. Figure 2 illustrates the effectiveness of trace re-execution in predicting file system caching performance with varying amounts of memory for the Apache web server.

- We have completed nearly all the project milestones planned for the first two years, and much of the work planned for the final year.
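The trace capture and re-execution idea described in the list above can be illustrated with a toy record/replay wrapper. This is a minimal sketch in Python under stated assumptions, not the project's tool: it intercepts only `read` calls on a file-like object rather than real system calls, and the names `RecordingFile`, `ReplayFile`, `checksum` and `capture_and_replay` are hypothetical, introduced here for illustration.

```python
import io
import json


class RecordingFile:
    """Wraps a file-like object and logs the result of every read call."""

    def __init__(self, inner, log):
        self.inner = inner
        self.log = log  # list of {"call", "arg", "result"} records

    def read(self, n):
        data = self.inner.read(n)
        # latin-1 maps each byte to one character, so the log is JSON-safe.
        self.log.append(
            {"call": "read", "arg": n, "result": data.decode("latin-1")}
        )
        return data


class ReplayFile:
    """Serves read calls from a captured log instead of a real file."""

    def __init__(self, log):
        self.pending = iter(log)

    def read(self, n):
        record = next(self.pending)
        # Replay is only valid if the program repeats the captured calls.
        if record["call"] != "read" or record["arg"] != n:
            raise RuntimeError("replay diverged from captured trace")
        return record["result"].encode("latin-1")


def checksum(f):
    """Hypothetical workload: read 4-byte chunks and sum the byte values."""
    total = 0
    while True:
        chunk = f.read(4)
        if not chunk:
            break
        total += sum(chunk)
    return total


def capture_and_replay(payload):
    """Run the workload live while logging, then re-run it from the log."""
    log = []
    live = checksum(RecordingFile(io.BytesIO(payload), log))
    trace = json.dumps(log)  # the serialised trace could be stored on disk
    replayed = checksum(ReplayFile(json.loads(trace)))
    return live, replayed, len(log)


if __name__ == "__main__":
    print(capture_and_replay(b"hello world"))
```

The second run never touches the original data source: every result comes from the trace, which is what would allow a captured workload to be re-run later, for instance under a simulator. The divergence check reflects why re-execution works only "often": it depends on the program issuing the same calls in the same order.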

FUTURE PLANS/EXPLOITATION

Current research: We are currently completing the research phase of the project, revising our designs for mixed-policy cache coherency protocols incorporating randomised data placement, using simulation and analytical modelling to study the resulting behaviour, and investigating new benchmarks.

The results take the form of protocol and policy designs, together with details of their analytical and benchmark performance characteristics. The simulation tools, benchmark suites and workload capture techniques we are developing also have broader application.

Next steps:

- Improve our suite of benchmarks, and characterise them to establish their memory access characteristics.

- Continue our simulation study of the proxy protocol and refinements thereof, looking in particular at its costs and at the issues in implementing it on network architectures (for example, to improve locality).

- Validate our analytical performance model, and modify it to capture the enhanced protocols we are developing.

- Investigate further our techniques for studying the performance of processes which interact with each other and with the operating system; this appears to be a critical issue, although it may lie beyond the current project.

Exploitation: The novel protocol techniques we have been developing so far are aimed at large shared-memory configurations. This is an important long-term goal, and is likely to become more so as shared memory techniques are deployed in local area networks. In smaller-scale machines, our tools, analytical modelling work, and experience will be valuable in designing robust and cost-effective systems.

We aim through this project to develop valuable and marketable simulation and benchmarking expertise and tools, and to establish design parameters for hardware and software for large parallel computers.

Publications and Technical Reports

What follows is a selection of papers most closely related to the project; please also refer to the investigators' home pages for further work, much of it connected:

- Andrew Bennett, Paul Kelly, Jacob Refstrup and Sarah Talbot, "Using Proxies to Reduce Cache Controller Contention in Large Shared-Memory Multiprocessors", in EuroPar'97.

- Sarah A. M. Talbot, Andrew J. Bennett and Paul H. J. Kelly, "Cautious, machine-independent performance tuning for shared-memory multiprocessors", in EuroPar'97.

- Bennett, A.J., Field, A.J. and Harrison, P.G., "Modelling and Validation of Shared Memory Coherency Protocols", Performance Evaluation, Vol. 27 & 28, 1996, pp. 541-562.

- Kanani, K., Field, A.J. and Harrison, P.G., "Performance Modelling and Verification of Cache Coherency Protocols using Stochastic Process Algebra", Proceedings of the 4th Workshop on Process Algebras and Performance Modelling, 1996, to appear.

- Ariel N. Burton and Paul H. J. Kelly, "Lightweight system call tracing for workload characterisation", March 1997.